This article continues my review of Robophobia by Professor Andrew Woods. See here for Part 1.

I want to start off Part 2 with a quote from Andrew Woods in the Introduction to his article, Robophobia, 93 U. Colo. L. Rev. 51 (Winter, 2022). Footnotes omitted.

Deciding where to deploy machine decision-makers is one of the most important policy questions of our time. The crucial question is not whether an algorithm has any flaws, but whether it outperforms current methods used to accomplish a task. Yet this view runs counter to the prevailing reactions to the introduction of algorithms in public life and in legal scholarship. Rather than engage in a rational calculation of who performs a task better, we place unreasonably high demands on robots. This is robophobia – a bias against robots, algorithms, and other nonhuman deciders.

Robophobia is pervasive. In healthcare, patients prefer human diagnoses to computerized diagnoses, even when they are told that the computer is more effective. In litigation, lawyers are reluctant to rely on – and juries seem suspicious of – [*56] computer-generated discovery results, even when they have been proven to be more accurate than human discovery results. . . .

In many different domains, algorithms are simply better at performing a given task than people. Algorithms outperform humans at discrete tasks in clinical health, psychology, hiring and admissions, and much more. Yet in setting after setting, we regularly prefer worse-performing humans to a robot alternative, often at an extreme cost.

Woods, Id. at pgs. 55-56

Bias Against AI in Electronic Discovery

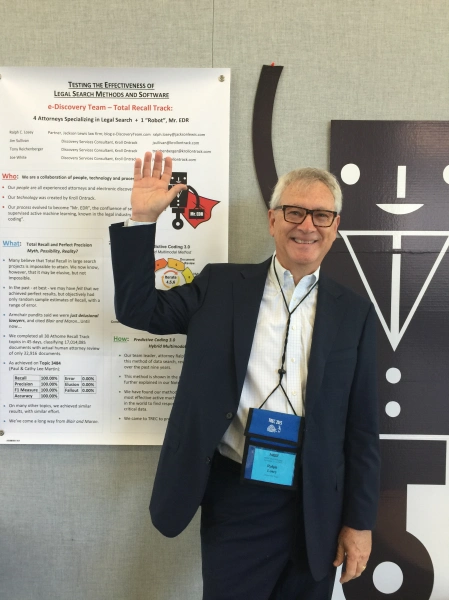

Electronic discovery is a good example of the regular preference for worse-performing humans over a robot alternative, often at an extreme cost. There can be no question now that any decent computer-assisted method will significantly outperform human review. We have made great progress in the law through the outstanding leadership of many lawyers and scientists in the field of ediscovery, but there is still a long way to go to convince non-specialists. Professor Woods understands this well and cites many of the leading legal experts on this topic at footnotes 137 to 148. Even though I am not included in his footnotes of authorities (what do you expect, the article was written by a mere human, not an AI), I reproduce them below in the order cited as a grateful shout-out to my esteemed colleagues.

- Maura R. Grossman & Gordon V. Cormack, Technology-Assisted Review in E-Discovery Can Be More Effective and More Efficient than Exhaustive Manual Review, 17 Rich. J.L. & Tech. 1 (2011).

- Sam Skolnik, Lawyers Aren’t Taking Full Advantage of AI Tools, Survey Shows, Bloomberg L. (May 14, 2019) (reporting results of a survey of 487 lawyers finding that lawyers have not well utilized useful new tools).

- Moore v. Publicis Groupe, 287 F.R.D. 182, 191 (S.D.N.Y. 2012) (“Computer-assisted review appears to be better than the available alternatives, and thus should be used in appropriate cases.”) Judge Andrew Peck.

- Bob Ambrogi, Latest ABA Technology Survey Provides Insights on E-Discovery Trends, Catalyst: E-Discovery Search Blog (Nov. 10, 2016) (noting that “firms are failing to use advanced e-discovery technologies or even any e-discovery technology”).

- Doug Austin, Announcing the State of the Industry Report 2021, eDiscovery Today (Jan. 5, 2021).

- David C. Blair & M. E. Maron, An Evaluation of Retrieval Effectiveness for a Full-Text Document-Retrieval System, 28 Commc’ns ACM 289 (1985).

- Thomas E. Stevens & Wayne C. Matus, Gaining a Comparative Advantage in the Process, Nat’l L.J. (Aug. 25, 2008) (describing a “general reluctance by counsel to rely on anything but what they perceive to be the most defensible positions in electronic discovery, even if those solutions do not hold up any sort of honest analysis of cost or quality”).

- Rio Tinto PLC v. Vale S.A., 306 F.R.D. 125, 127 (S.D.N.Y. 2015). Judge Andrew Peck.

- See The Sedona Conference, The Sedona Conference Best Practices Commentary on the Use of Search & Information Retrieval Methods in E-Discovery, 15 Sedona Conf. J. 217, 235-26 (2014) (“Some litigators continue to primarily rely upon manual review of information as part of their review process. Principal rationales [include] . . . the perception that there is a lack of scientific validity of search technologies necessary to defend against a court challenge . . . .”).

- Doug Austin, Learning to Trust TAR as Much as Keyword Search: eDiscovery Best Practices, eDiscovery Today (June 28, 2021).

- Robert Ambrogi, Fear Not, Lawyers, AI Is Not Your Enemy, Above Law (Oct. 30, 2017).

Robophobia Article Is A First

Robophobia is the first piece of legal scholarship to address our misjudgment of algorithms head-on. Professor Woods makes this assertion up front and I believe it. The Article catalogs different ways that we now misjudge poor algorithms. The evidence of our robophobia is overwhelming, but, before Professor Woods’s work, it had all been in silos and was not seriously considered. He is the first to bring it all together and consider the legal implications.

His article goes on to suggest several reforms, also a first. But before I get to that, a more detailed overview is in order. The Article is in six parts. Part I provides several examples of robophobia. Although it is a long list, he says it is far from exhaustive. Part II distinguishes different types of robophobia. Part III considers potential explanations for robophobia. Part IV makes a strong, balanced case for being wary of machine decision-makers, including our inclination to, in some situations, over-rely on machines. Part V outlines the components of his case against robophobia. The concluding Part VI offers “tentative policy prescriptions for encouraging rational thinking – and policy making – when it comes to nonhuman deciders.”

Part II of the Article – Types of Robophobia

Professor Woods identifies five different types of robophobia.

- Elevated Performance Standards: we expect algorithms to greatly outperform the human alternatives and often demand perfection.

- Elevated Process Standards: we demand algorithms explain their decision-making processes clearly and fully; the reasoning must be plain and understandable to human reviewers.

- Harsher Judgments: algorithmic mistakes are routinely judged more severely than human errors. This is a corollary of elevated performance standards.

- Distrust: our confidence in automated decisions is weak and fragile. Would you rather get into an empty AI Uber, or one driven by a scruffy-looking human?

- Prioritizing Human Decisions: we insist on keeping “humans in the loop” and give more weight to human input than to algorithmic input.

Part III – Explaining Robophobia

Professor Woods considers seven different explanations for robophobia.

- Fear of the Unknown

- Transparency Concerns

- Loss of Control

- Job Anxiety

- Disgust

- Gambling for Perfect Decisions

- Overconfidence in Human Decisions

I’m limiting my review here, since the explanations for most of these should be obvious by now and I want to limit the length of this blog post. But the disgust explanation was not one I expected, and a short quote by Andrew Woods might be helpful, along with the robot photo I added.

[T]he more that robots become humanlike, the more they can trigger feelings of disgust. In the 1970s, roboticist Masahiro Mori hypothesized that people would be more willing to accept robots as the machines became more humanlike, but only up to a point, and then human acceptance of nearly-human robots would decline.[227] This decline has been called the “uncanny valley,” and it has turned out to be a profound insight about how humans react to nonhuman agents. This means that as robots take the place of humans with increasing frequency—companion robots for the elderly, sex robots for the lonely, doctor robots for the sick—reports of robots’ uncanny features will likely increase.

For interesting background on the uncanny valley, see these YouTube videos and experience robot disgust for yourself. Uncanny Valley by Popular Science 2008 (old, but pretty disgusting). Here’s a more recent and detailed one, pretty good, by a popular twenty-something with pink hair. Why is this image creepy? by TUV 2022.

Parts IV and V – The Cases For and Against Robophobia

Part IV lays out all the good reasons to be suspicious of delegating decisions to algorithms. Part V is the new counter-argument, one we have not heard before: why robophobia is bad for us. This is probably the heart of the article, and I suggest you read this part for sure.

Here is a good quote at the end of Part IV to put the pro versus anti-robot positions into perspective:

Pro-robot bias is no better than antirobot bias. If we are inclined both to over- and underrely on robots, then we need to correct both problems—the human fear of robots is one piece of the larger puzzle of how robots and humans should coexist. The regulatory challenge vis-à-vis human-robot interactions then is not merely minimizing one problem or the other but rather making a rational assessment of the risks and rewards offered by nonhuman decision-makers. This requires a clear sense of the key variables along which to evaluate decision-makers.

All-too-human bias and prejudice cloud clear, objective thinking. In the first two paragraphs of Part V of his article, Professor Woods deftly summarizes the case against robophobia.

We are irrational in our embrace of technology, which is driven more by intuition than reasoned debate. Sensible policy will only come from a thoughtful and deliberate—and perhaps counterintuitive—approach to integrating robots into our society. This is a point about the policymaking process as much as it is about the policies themselves. And at the moment, we are getting it wrong—most especially with the important policy choice of where to transfer control from a human decider to a robot decider.

Specifically, in most domains, we should accept much more risk from algorithms than we currently do. We should assess their performance comparatively—usually by comparing robots to the human decider they would replace—and we should care about rates of improvement. This means we should embrace robot decision-makers whenever they are better than human decision-makers. We should even embrace robot decision-makers when they are less effective than humans, as long as we have a high level of confidence that they will soon become better than humans. Implicit in this framing is a rejection of deontological claims—some would say a “right”—to having humans do certain tasks instead of robots.[255] But, this is not to say that we should prefer robots to humans in general. Indeed, we must be just as vigilant about the risks of irrationally preferring robots over humans, which can be just as harmful.[256]

The concluding Part Three of my review of Robophobia is coming soon. In the meantime, take a break and think about Professor Woods’s policy-based perspective. That is something practicing lawyers like me do not do often enough. Also, it is of value to consider Andrew’s reference to “deontology,” not a word previously in my vocabulary. It is a good ethics term to pick up. Thank you, Immanuel Kant.

Ralph Losey Copyright 2022 – ALL RIGHTS RESERVED